TL;DR: Which is more accurate?

AI transcription often beats live human note-takers on verbatim capture for clear audio and routine meetings, while humans win on judgment, context, and decisions. In short, AI vs human note-taking breaks down like this: machines capture words quickly and make them searchable, while people capture intent and action items.

When AI alone is good enough: recorded standups, product demos, or lectures with clear speech and few speakers. These use cases need speed, search, and reuse more than interpretive judgment. When to keep a human: interviews, legal or medical notes, negotiations, and meetings where responsibility is unclear, because nuance matters and liability is real.

Best single approach: a hybrid workflow where AI produces a full transcript and draft summary, and a human reviewer quickly validates the summary, tags decisions, and confirms action items. This combo delivers near-real-time capture, lower cost than full human transcription, and better decision accuracy than AI alone.

Bottom line: use AI for scale and speed, rely on humans for judgment, and combine them when accuracy and accountability matter most.

Why accuracy in note-taking matters (decisions, liability, memory, reuse)

Clear, accurate notes change work outcomes. Meeting-heavy teams rely on notes to decide, assign tasks, and prove what happened. When notes miss details, decisions get delayed or reversed. That waste compounds across projects and stakeholders.

Four high-stakes outcomes affected by note accuracy

Good notes speed decisions. They make follow-ups exact and measurable. They reduce rework and help teams move faster. They also protect people and institutions from misunderstandings.

- Decisions: A missing number or condition can change a product choice. Small errors create long delays, and omissions multiply across dependent tasks.

- Follow-ups: Incomplete action items create dropped work. Teams spend time chasing clarifications. That costs hours and morale.

- Legal risk: Bad records raise liability and compliance exposure. Courts treat business records seriously in disputes.

- Institutional memory: Accurate notes store what teams learned. That knowledge saves onboarding time and prevents repeating mistakes.

Federal Rule of Evidence 803(6) provides that a memorandum, report, record, or data compilation, in any form, is admissible if it was made at or near the time by, or from information transmitted by, a person with knowledge, and kept in the course of a regularly conducted business activity. Because that standard turns on how records are made and kept, note quality can affect investigations: if notes are incomplete, teams face higher risk in audits or disputes.

Accuracy also enables reuse. Clean transcripts and summaries feed research, templates, and a searchable knowledge base. That reuse shrinks prep time for future meetings and raises institutional IQ. Investing in reliable capture pays back through faster action, clearer responsibility, and lower legal exposure.

How AI note-taking works — tech and typical failure modes (with TicNote Cloud features)

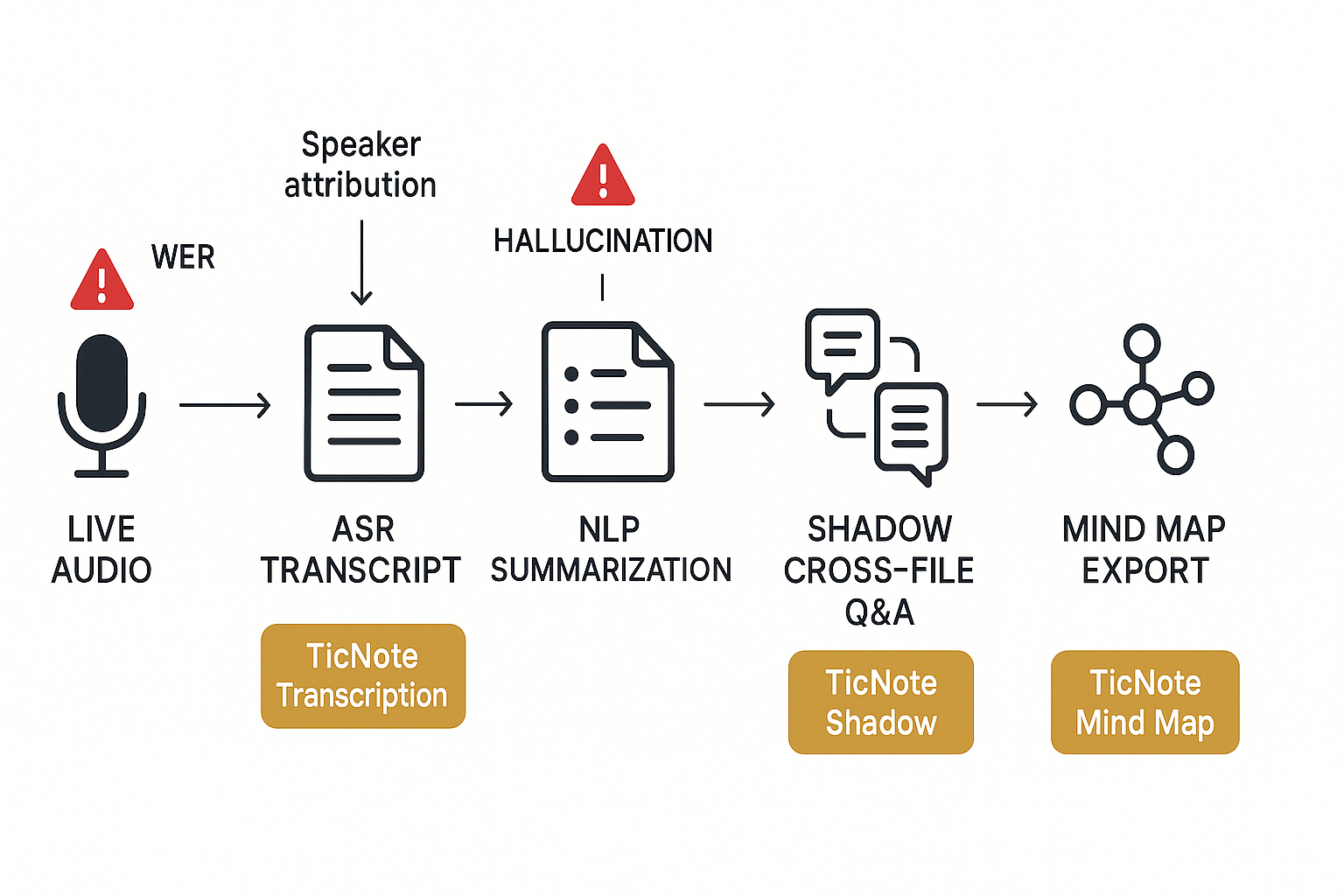

AI note-taking pipelines turn meeting audio into searchable knowledge. For the AI vs human note-taking debate, the pipeline matters: speech-to-text, topic extraction, and grounding shape what people read later. Below I explain the core tech and the common failure points, and show how TicNote Cloud tools plug the gaps.

Core tech: audio to actions

First, automatic speech recognition (ASR) converts audio to raw text. Then natural language processing (NLP) groups topics, extracts decisions, and generates summaries (short, structured notes). Grounding tools link transcript lines back to source audio or files so answers stay verifiable.
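To make grounding concrete, here is a minimal Python sketch of the data a grounded summary might carry. The `Segment` and `SummaryItem` types and the `verify_item` helper are hypothetical illustrations, not TicNote's data model; the only assumption is that every summary item stores the IDs of the transcript segments that support it, so a reviewer can jump back to the speaker and timestamp.

```python
from dataclasses import dataclass, field


@dataclass
class Segment:
    """One transcript segment (hypothetical structure)."""
    seg_id: str
    speaker: str
    start_s: float  # offset into the recording, in seconds
    text: str


@dataclass
class SummaryItem:
    """A decision or action in the summary, plus the segments that support it."""
    kind: str  # e.g. "decision" or "action"
    text: str
    source_ids: list[str] = field(default_factory=list)


def verify_item(item: SummaryItem, segments: dict[str, Segment]) -> list[Segment]:
    """Return the cited segments; raise if the item cites nothing or a missing segment."""
    missing = [sid for sid in item.source_ids if sid not in segments]
    if not item.source_ids or missing:
        raise ValueError(f"ungrounded item {item.text!r}; missing sources: {missing}")
    return [segments[sid] for sid in item.source_ids]


# Usage with made-up example data
segments = {s.seg_id: s for s in [
    Segment("s1", "Ana", 12.0, "Let's ship the beta on March 3."),
    Segment("s2", "Raj", 15.5, "I'll update the release notes."),
]}
decision = SummaryItem("decision", "Ship the beta on March 3", ["s1"])
for seg in verify_item(decision, segments):
    print(f"{seg.speaker} @ {seg.start_s:.1f}s: {seg.text}")
```

An item that cites no segment, or a segment that does not exist, is rejected rather than silently published, which is the property that makes a summary checkable.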

Typical failure modes and where they happen

- Word error rate (WER) and mis-transcription. ASR can drop or alter words, especially names and numbers. The Hyper-BTS dataset, described in Hyper-BTS Dataset: Scalability and Enhanced Analysis of Back TranScription (BTS) for ASR Post-Processing (2024), is approximately five times larger than previous datasets and addresses many ASR error categories. Larger training sets reduce some errors, but edge cases remain.

- Speaker mis-attribution. Models often assign the wrong speaker label when voices overlap or the microphone is far from the speakers.

- Summarization errors and hallucination (when the model invents facts). Summaries can omit context, merge items incorrectly, or propose actions that never happened.

- Missed action items and context loss across meetings. Single-transcript summaries miss cross-meeting decisions.
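Word error rate counts the word substitutions, deletions, and insertions needed to align the ASR output with a reference transcript, divided by the number of reference words. A minimal Python sketch of that word-level edit distance follows; it only lowercases and splits on whitespace, so a real evaluation would also normalize punctuation and number formats (here "3rd" versus "third" counts as an error).

```python
def wer(reference: str, hypothesis: str) -> float:
    """Word error rate = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript is empty")
    # prev[j] holds the edit distance between the reference words seen so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from the hypothesis
                curr[j - 1] + 1,     # insertion: extra word in the hypothesis
                prev[j - 1] + cost,  # substitution (or a match when cost == 0)
            )
        prev = curr
    return prev[-1] / len(ref)


# Two substitutions ("the" -> "a", "third" -> "3rd") over six reference words: 2/6
print(wer("ship the beta on March third", "ship a beta on March 3rd"))
```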

How TicNote Cloud reduces risk

- High-accuracy ASR: TicNote’s transcription aims for up to 98% accuracy on clear recordings, lowering WER before downstream steps.

- Speaker clarity and audio capture: multi-source upload and device capture improve speaker distinction.

- Shadow cross-file Q&A: Shadow grounds answers to transcript lines and files, so you can verify claims and cut hallucination risk.

- Mind map exports: visual maps surface topics and decisions, making missed action items easy to spot.

- Best practice: run a quick reproducible test comparing human notes to TicNote transcripts, then use Shadow to validate ambiguous points.

Try TicNote Cloud free today and test transcription accuracy for your team

Head-to-head accuracy comparison: AI vs human note-takers (metrics & studies)

Accuracy isn’t one number. It’s a set of measures you must pick before you compare AI vs human note-taking. Use clear, repeatable metrics so results mean something across tools and teams.

Pick four practical metrics

Use these four to compare systems and workflows:

| Metric | What it measures | How to test (quick) |
| --- | --- | --- |
| Word error rate (WER) | Word-level transcription errors, as a percentage of reference words | Compare the model transcript to a verbatim ground-truth transcript and compute WER (%) |
| Factual recall | Percentage of factual statements captured correctly | Blind review: count factual items in the ground truth and how many the notes match |
| Action-item capture | Percentage of tasks, owners, and deadlines recorded | Count action items in the ground truth, then check each for presence and correctness in the notes |
| Time-to-action | Elapsed time (seconds or hours) from meeting end to the first completed follow-up | Record the meeting-end timestamp and the timestamp of the first task update or completed step |
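The capture metrics and time-to-action reduce to simple arithmetic once a blind reviewer has decided which notes match which ground-truth items. This Python sketch assumes the reviewer has already normalized each item to a comparable key (the "owner:task" keys and dates below are made up); the matching judgment itself stays with the human.

```python
from datetime import datetime


def capture_rate(ground_truth: set[str], captured: set[str]) -> float:
    """Share of ground-truth items (facts or action items) that the notes also contain."""
    if not ground_truth:
        raise ValueError("ground truth is empty")
    return len(ground_truth & captured) / len(ground_truth)


def time_to_action_hours(meeting_end: datetime, first_follow_up: datetime) -> float:
    """Elapsed hours from the end of the meeting to the first completed follow-up."""
    return (first_follow_up - meeting_end).total_seconds() / 3600


# Hypothetical action items, normalized by a reviewer to "owner:task" keys
truth = {"ana:release-notes", "raj:pricing-page", "lee:qa-signoff"}
notes = {"ana:release-notes", "lee:qa-signoff"}
print(f"action-item capture: {capture_rate(truth, notes):.0%}")
print(time_to_action_hours(datetime(2024, 5, 6, 10, 0), datetime(2024, 5, 6, 14, 30)))
```

The same `capture_rate` works for factual recall; only the ground-truth set changes.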

What peer-reviewed and industry evidence says

Benchmarks vary by setting. In controlled transcription tasks, human transcriptionists can be extremely accurate. The study Comparison of Voice-Automated Transcription and Human Transcription in Generating Pathology Reports (2003) found that human transcriptionists achieved a mean accuracy rate of 99.6%, while voice-recognition software achieved 93.6%. That suggests humans excel at verbatim capture in controlled, single-speaker tasks, though the software figure reflects the tools available in 2003. Live meetings are messier: multiple speakers, crosstalk, poor audio, and the need to summarize all reduce how many raw facts and actions a human note-taker captures.

How to read TicNote’s up-to-98% claim

TicNote’s up-to-98% figure refers to transcription accuracy on high-quality audio. If accuracy is read as 1 minus WER, that corresponds to a WER of about 2% under good conditions. That’s a fast, reproducible benchmark for raw text capture. But don’t conflate transcript WER with meeting comprehension: a near-perfect transcript still needs topic grouping, decision extraction, and context linking to become usable notes.
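A quick back-of-the-envelope calculation shows why a low WER still leaves work for reviewers. The Python sketch below assumes accuracy equals 1 minus WER and a speaking rate of 150 words per minute (an illustrative assumption, not a measured figure), and applies the accuracy figures quoted in this article to a one-hour meeting.

```python
def expected_word_errors(accuracy: float, minutes: float, words_per_minute: float = 150) -> float:
    """Expected word errors, assuming accuracy = 1 - WER and a steady speaking rate."""
    total_words = minutes * words_per_minute
    return total_words * (1 - accuracy)


# Accuracy figures quoted in this article, applied to a 60-minute meeting (about 9,000 words)
for accuracy in (0.98, 0.936, 0.996):
    print(f"{accuracy:.1%} accuracy -> about {expected_word_errors(accuracy, 60):.0f} word errors")
```

At 98%, roughly 180 words in an hour-long meeting could still be wrong under these assumptions; if a few of them are names, numbers, or owners, the notes still need a human check.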

For live meetings, expect a split outcome: automated transcripts often beat distracted human notetakers on verbatim recall, while humans may still outperform AI at interpreting nuance and prioritizing action items. The smart test is hybrid: measure both transcript WER and end-to-end outcomes like factual recall and action-item capture in the same experiment.

Use these steps in your evaluation: record the same meeting, produce both a TicNote transcript and human notes, then compute WER and count factual and action-item matches. That gives you a practical view of where each approach is strong and where it carries risk.

Common error types, root causes, and risk mitigation strategies

When teams compare AI vs human note-taking, common errors cluster into predictable buckets. This section lists the typical mistakes, explains why they happen, and gives practical fixes teams can use today. Keep these simple checks in your meeting workflow and you’ll cut risk fast.

Transcription mistakes

Speech gets garbled when audio is noisy, speakers overlap, or accents vary, and both AI transcription and human note-takers miss words for the same reasons. Mitigation:

- Use a brief pre-meeting checklist: ask participants to mute when not speaking and use headsets.

- Prefer direct-record capture or high-quality uploads over phone audio.

- Add a human spot-checker to review flagged low-confidence segments.
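The spot-check step can be as simple as sorting segments by confidence. Many ASR systems report a confidence score per word or segment, but the `confidence` field name, the 0-to-1 scale, and the 0.85 threshold in this Python sketch are assumptions for illustration; tune the threshold against your own pilot data.

```python
def flag_for_review(segments: list[dict], threshold: float = 0.85) -> list[dict]:
    """Return segments below the confidence threshold, least confident first.

    Segments without a confidence score are treated as 0.0, so they always get flagged.
    """
    flagged = [s for s in segments if s.get("confidence", 0.0) < threshold]
    return sorted(flagged, key=lambda s: s.get("confidence", 0.0))


# Hypothetical segments: start time, text, and an assumed 0-1 confidence field
segments = [
    {"start": "00:04:12", "text": "budget is forty k", "confidence": 0.62},
    {"start": "00:04:20", "text": "agreed", "confidence": 0.97},
    {"start": "00:05:01", "text": "ping Oksana about it", "confidence": 0.78},
]
for seg in flag_for_review(segments):
    print(seg["start"], seg["confidence"], seg["text"])
```

Sorting least-confident first means a reviewer with only a few minutes still sees the riskiest lines, such as the budget figure here.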

Summarization bias

Summaries can skew toward the speaker who talks most or toward patterns in the model’s training data. That hides minority views and risks bad decisions. Mitigation:

- Pick a template that forces sections: decisions, open questions, owners, and evidence.

- Require a reviewer to confirm that all viewpoints appear in the summary.

- Use cross-file Q&A to surface dissenting notes from other meetings.
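A reviewer checking viewpoint coverage can start from a crude signal: how much each person spoke, and which speakers the summary never cites. This Python sketch assumes transcript segments arrive as (speaker, text) pairs and that the summary records which speakers it attributes points to. Word counts do not measure viewpoints, so treat the output as a prompt for human review, not a verdict.

```python
from collections import Counter


def talk_share(segments: list[tuple[str, str]]) -> dict[str, float]:
    """Fraction of transcript words spoken by each speaker."""
    words = Counter()
    for speaker, text in segments:
        words[speaker] += len(text.split())
    total = sum(words.values())
    if total == 0:
        return {}
    return {speaker: count / total for speaker, count in words.items()}


def uncited_speakers(segments: list[tuple[str, str]], cited: set[str]) -> set[str]:
    """Speakers who spoke in the meeting but are never cited in the summary."""
    return {speaker for speaker, _ in segments} - cited


# Made-up example: Ana dominates, and the summary only cites Ana
segments = [
    ("Ana", "We should ship the beta now because the numbers look good"),
    ("Raj", "I disagree"),
    ("Ana", "Let's decide then"),
]
print(talk_share(segments))                 # Ana 0.875, Raj 0.125
print(uncited_speakers(segments, {"Ana"}))  # {'Raj'}
```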

Missed context

Notes stripped of context lose the reason a decision was made, and that missing context causes rework and liability. Mitigation:

- Tag notes with meeting purpose, agenda, and key documents.

- Capture short verbatim quotes for critical claims.

- Link to source files or timestamped transcript clips.

Misattributed decisions

Attributing tasks or approvals to the wrong person breeds confusion. It usually stems from poor speaker labeling and rushed summaries. Mitigation:

- Assign verification roles after each meeting: scribe, verifier, and owner.

- Use speaker confirmation at the meeting end: “Do you accept this action?”

- Keep a human-in-the-loop approval step before publishing final notes.
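The human-in-the-loop approval step can be enforced with a simple gate that refuses to publish until every action item has an owner who accepted it and a reviewer who checked it. In this Python sketch the `ActionItem` fields mirror the scribe, verifier, and owner roles above; the names are hypothetical and not tied to any particular tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ActionItem:
    task: str
    owner: Optional[str] = None        # person responsible for the task
    accepted: bool = False             # owner answered yes to "Do you accept this action?"
    verified_by: Optional[str] = None  # human reviewer who checked the item


def publish_blockers(items: list[ActionItem]) -> list[str]:
    """List the reasons the notes are not ready to publish; an empty list means ready."""
    problems = []
    for item in items:
        if not item.owner:
            problems.append(f"{item.task}: no owner")
        elif not item.accepted:
            problems.append(f"{item.task}: {item.owner} has not accepted")
        if not item.verified_by:
            problems.append(f"{item.task}: not verified")
    return problems


items = [
    ActionItem("Update release notes", owner="Raj", accepted=True, verified_by="Mia"),
    ActionItem("Fix pricing page"),
]
print(publish_blockers(items))
```

Returning reasons instead of a bare yes or no tells the verifier exactly which item to chase.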

Quick mitigation checklist:

- Use templates for structure.

- Add role-based verification.

- Insert a short human review for high-risk items.

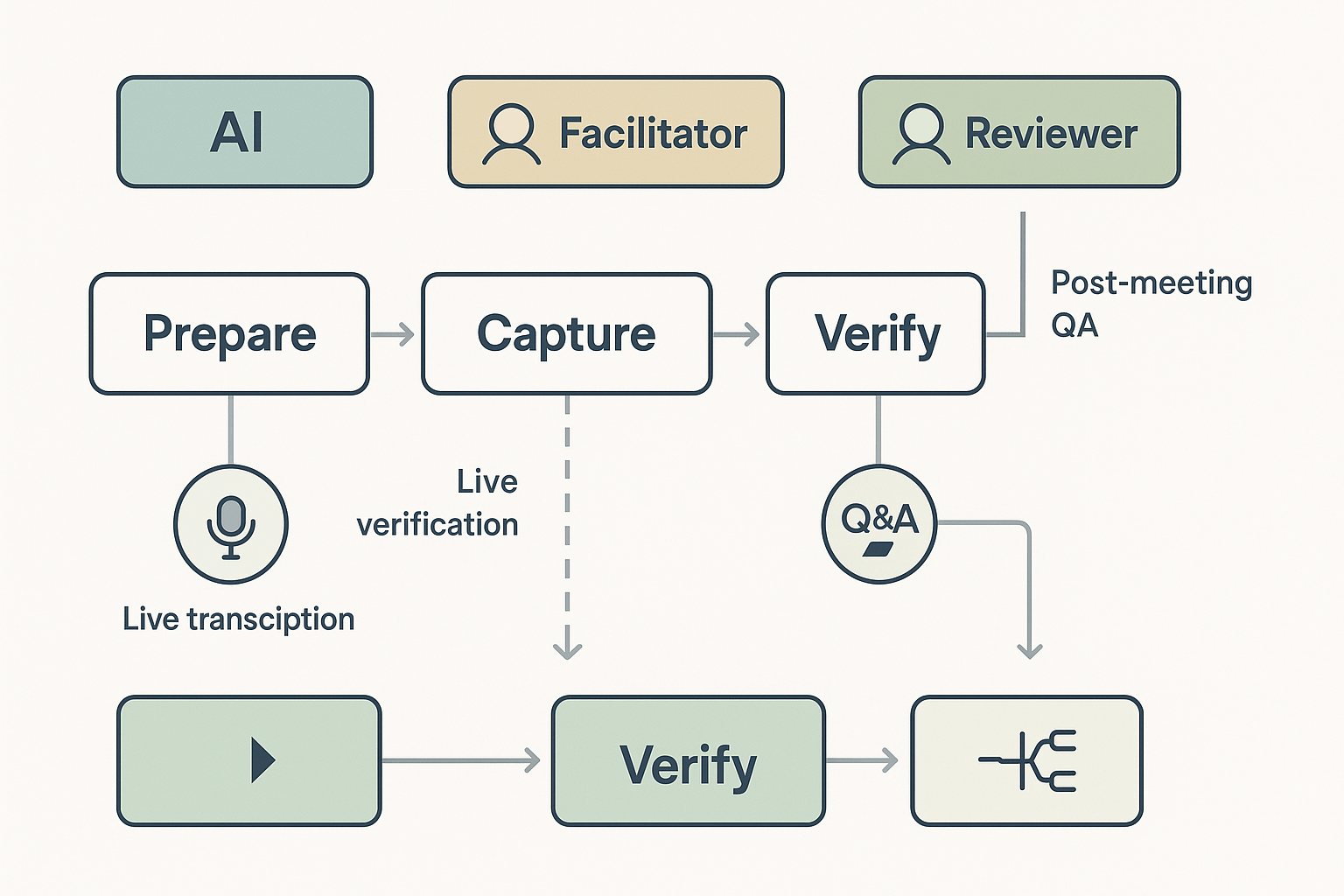

Hybrid workflows let AI do the heavy lifting, and humans add judgment and context. Start with a clear role split, use AI to capture everything, then have a facilitator verify key points. This hybrid approach improves speed, reduces missed actions, and keeps legal or context-sensitive choices under human control. It’s a practical answer to AI vs human note-taking for meeting-heavy teams.

Pattern 1: AI-first draft, human quality assurance

Let AI capture and draft summaries, then let a person verify and finalize. Steps:

- Capture: Turn on TicNote Cloud live transcription during the meeting. AI creates a transcript and draft summary.

- Verify: A note owner reviews the draft within 24 hours, fixes errors, and confirms decisions.

- Publish: Export the final summary and action list to shared tools.

Benefits: you get fast, searchable notes and fewer missed items.

Pattern 2: Facilitator verification during capture

Use a meeting facilitator to validate decisions live. Role split example:

- Facilitator: highlights decisions and asks clarifying questions.

- AI: records and timestamps statements with live transcription.

- Reviewer: does post-meeting QA and tags follow-ups.

This split helps keep sensitive calls compliant and trims post-meeting edits.

Pattern 3: Cross-file checks and visual review

After QA, run a cross-file Q&A to catch missed decisions. Use TicNote Shadow to query across meetings and files and surface gaps. Then turn transcripts into a mind map for an executive review; the visual map helps teams spot missing links and unassigned tasks.
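Shadow’s retrieval method isn’t described here, so the following is only a stand-in: a plain keyword search in Python across several transcripts that returns the file and timestamp of each hit. It shows the shape of a grounded cross-file answer (every result points back to a source line), using hypothetical file names and transcript lines.

```python
def find_mentions(
    transcripts: dict[str, list[tuple[str, str]]], keyword: str
) -> list[tuple[str, str, str]]:
    """Return (file, timestamp, text) for every transcript line that mentions the keyword."""
    needle = keyword.lower()
    hits = []
    for file_name in sorted(transcripts):  # sorted so results read in file-name order
        for timestamp, text in transcripts[file_name]:
            if needle in text.lower():
                hits.append((file_name, timestamp, text))
    return hits


transcripts = {
    "2024-05-08-review.txt": [("00:12:02", "Raj will take the pricing page")],
    "2024-05-06-standup.txt": [
        ("00:03:10", "Pricing page still has no owner"),
        ("00:07:45", "QA sign-off is done"),
    ],
}
for file_name, timestamp, text in find_mentions(transcripts, "pricing page"):
    print(f"{file_name} @ {timestamp}: {text}")
```

Read in order, the two hits show an unowned task on May 6 getting an owner on May 8, the kind of cross-meeting link a single-transcript summary misses.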

Quick checklist for rollout:

- Assign note owners before each meeting.

- Use templates for decisions, risks, and actions.

- Run a short reproducible test with one meeting and one human transcriber.

- Iterate on role splits and templates.

Real-world case studies & testimonials (3 short stories)

The three brief stories below describe real teams that compared workflows, combined AI and human notes, and improved outcomes. One team even ran a mini AI vs human note-taking experiment to guide its rollout.

Consulting team: close action items faster

Problem: A consulting team missed follow-up steps after client workshops. They spent hours sorting emails and transcripts to find decisions.

Solution: They used TicNote Cloud to capture live transcripts, auto-summarize decisions, and add action items to a shared folder. Shadow cross-file Q&A let consultants ask past meeting files for linked tasks.

Measured benefit: Within eight weeks the team reported faster handoffs and fewer missed tasks. Average time to mark an action item done fell by roughly half, and partners said client follow-ups felt smoother.

Legal and admin: improve accuracy and compliance

Problem: A legal admin team needed reliable, auditable notes for compliance reviews. Manual notes varied by person and lacked consistent structure.

Solution: The team adopted TicNote templates and the structured transcript export. They used high-accuracy transcription for verbatim records, then applied a single reviewer to validate final notes.

Measured benefit: Review cycles shortened and error rates dropped. The legal lead said audits closed sooner because notes were uniform and easily searched across cases.

Therapy clinic: protect privacy while improving notes

Problem: Counselors wanted faster note-taking but worried about patient privacy. They needed encrypted storage and limits on using their data for AI training.

Solution: The clinic used TicNote Cloud with private-by-default storage and selective uploads. Clinicians kept sensitive audio local, used transcript uploads when consented, and used Shadow to summarize care points.

Measured benefit: Clinicians saved time on record-keeping and retained stronger client trust. Documentation quality rose, and clinicians had more time for direct care.

Step-by-step implementation guide: testing and adopting TicNote Cloud

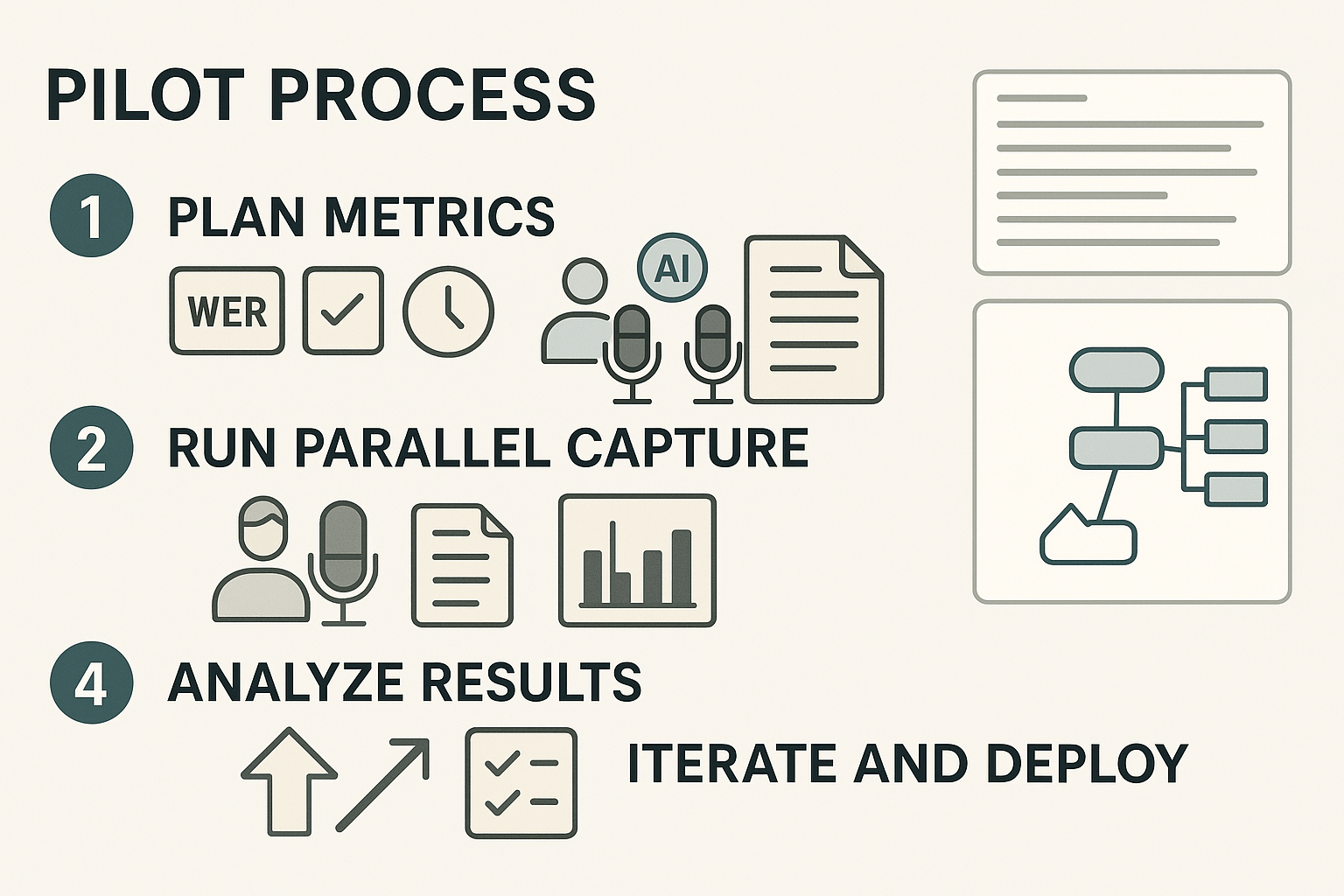

Start with a short pilot that compares AI vs human note-taking side by side. Set clear goals, recruit meeting owners, and pick 3–5 real meetings. Run the pilot for two weeks to collect enough samples for analysis.

Pilot checklist

Complete these steps before you start: create an admin account and invite pilot users, configure recording and transcription limits for your plan, draft a short consent note for participants, and document privacy rules.

- Pick 3 meeting types: status, customer call, and decision session.

- Assign a human note-taker for each meeting.

- Enable TicNote Cloud recording and live transcription.

- Save human notes and TicNote transcripts to the same folder.

- Run Shadow cross-file Q&A after meetings to surface missed items.

What to measure

Measure accuracy, usefulness, and time savings. Use these core metrics and collect them consistently.

- Word Error Rate (WER) for transcripts.

- Action-item capture rate (percent found in notes).

- Decision recall (was the decision captured?).

- Time saved on summaries and search.

- User satisfaction (simple 1–5 survey).

Reproducible accuracy test and downloadable CSV

Run the test with two parallel captures of the same meeting: record the audio, collect the human notes, and export the TicNote transcript. Log each comparison in the CSV template.

CSV columns to include: meeting_id, timestamp, speaker, reference_text, human_note, ticnote_transcript, wer, action_item_flag, decision_flag, reviewer_notes.
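Here is a minimal Python sketch that writes the template with the standard-library `csv` module, using the column names listed above. The example row is made-up data; for a real file, open it with `open("accuracy_test.csv", "w", newline="")` (the file name is hypothetical) and pass that instead of the in-memory buffer.

```python
import csv
import io

COLUMNS = [
    "meeting_id", "timestamp", "speaker", "reference_text", "human_note",
    "ticnote_transcript", "wer", "action_item_flag", "decision_flag", "reviewer_notes",
]


def write_template(stream, rows=()):
    """Write the header row, then any comparison rows (dicts keyed by COLUMNS)."""
    writer = csv.DictWriter(stream, fieldnames=COLUMNS)
    writer.writeheader()
    for row in rows:
        writer.writerow(row)


buf = io.StringIO()
write_template(buf, [{
    "meeting_id": "m01",
    "timestamp": "00:04:12",
    "speaker": "Ana",
    "reference_text": "Ship the beta on March 3",
    "human_note": "Beta ships in March",
    "ticnote_transcript": "ship the beta on march 3",
    "wer": 0.0,  # case-insensitive word match against reference_text
    "action_item_flag": 0,
    "decision_flag": 1,
    "reviewer_notes": "Human note drops the exact date",
}])
print(buf.getvalue())
```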

Steps for the test:

- Record the meeting audio and save it.

- Have a blinded reviewer compare human notes to TicNote output.

- Calculate WER and flag missed actions.

- Aggregate results across meetings and present a summary.
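Once the CSV is filled in, aggregation takes a few lines of Python. This sketch assumes the column names above, with `wer` stored as a decimal fraction and the two flag columns as 0 or 1. It reports the mean per-row WER, which weights short and long segments equally; a pooled WER would instead divide total word errors by total reference words.

```python
import csv
import io
from collections import defaultdict
from statistics import mean


def summarize(stream) -> dict[str, dict[str, float]]:
    """Per-meeting mean WER plus counts of rows flagged as action items or decisions."""
    rows_by_meeting = defaultdict(list)
    for row in csv.DictReader(stream):
        rows_by_meeting[row["meeting_id"]].append(row)
    return {
        meeting_id: {
            "mean_wer": mean(float(r["wer"]) for r in rows),
            "action_items": sum(int(r["action_item_flag"]) for r in rows),
            "decisions": sum(int(r["decision_flag"]) for r in rows),
        }
        for meeting_id, rows in rows_by_meeting.items()
    }


# Made-up sample with only the columns this summary needs
sample = io.StringIO(
    "meeting_id,wer,action_item_flag,decision_flag\n"
    "m01,0.0,0,1\n"
    "m01,0.1,1,0\n"
    "m02,0.05,1,1\n"
)
for meeting_id, stats in summarize(sample).items():
    print(meeting_id, stats)
```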

Training templates, integrations, and adoption aids

Provide a 30-minute onboarding script and a note-taking checklist. Integrate TicNote with Slack and Notion for follow-ups and exports. Use Shadow cross-file Q&A to find decisions across meetings. Auto-generated mind maps speed reviews and stakeholder buy-in.

Start small, measure objectively, and iterate every sprint. Use pilot data to set org-wide policies and guide roll-out.

Privacy, security, ethics & accessibility: downsides and guardrails

When evaluating AI vs human note-taking, teams worry most about data handling, consent, bias, and whether notes remain usable for everyone. These issues affect legal risk, decision traceability, and team trust. Below are the main concerns and clear guardrails security and legal reviewers can check.

Data handling and consent

Follow consent and data minimization rules. The EU’s Your Europe page "Data protection under GDPR" states that consent under the GDPR must be freely given, specific, informed, and unambiguous, provided through a clear affirmative action. Require meeting opt-in where needed, record consent, and document retention policies.

Technical and operational guardrails

- Private by default storage, with encryption at rest and in transit.

- Tenant controls: data locality, retention limits, and audit logs.

- Access controls: role-based permissions and Single Sign-On for enterprises.

- Human review workflows, redaction options, and export controls for sensitive items.
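As an illustration of a redaction pass before export, here is a deliberately crude Python sketch that masks email addresses and phone-number-like digit runs. The patterns are assumptions, not TicNote’s redaction feature: they will miss many formats and over-redact others (a date such as 2024-05-06 also matches the phone pattern), so sensitive notes still need human review.

```python
import re

# Illustrative patterns only; real redaction needs broader, tested rules
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text: str) -> str:
    """Replace each pattern match with a bracketed label such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


print(redact("Email ana@example.com or call +1 (555) 010-2030 before Friday."))
```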

Ethics, bias, and accessibility

Run bias checks on summaries and automated action items. Keep humans in the loop for legal or clinical notes. Support accessibility with searchable transcripts, translated text, closed captions, and exportable mind maps (PNG, Xmind). These controls lower compliance risk and improve reuse across teams.