TL;DR: Claude Opus 4.7 vs Gemini 3.1, and where TicNote Cloud fits

Use the 30 free monthly Claude Opus 4.7 Premium credits to test the Claude Opus 4.7 vs Gemini 3.1 choice in real work. In short, Opus is stronger for agentic coding, while Gemini fits lower-cost scale and native multimodal intake. TicNote Cloud includes 30 Claude Opus 4.7 Premium requests monthly, so you can compare without another AI subscription.

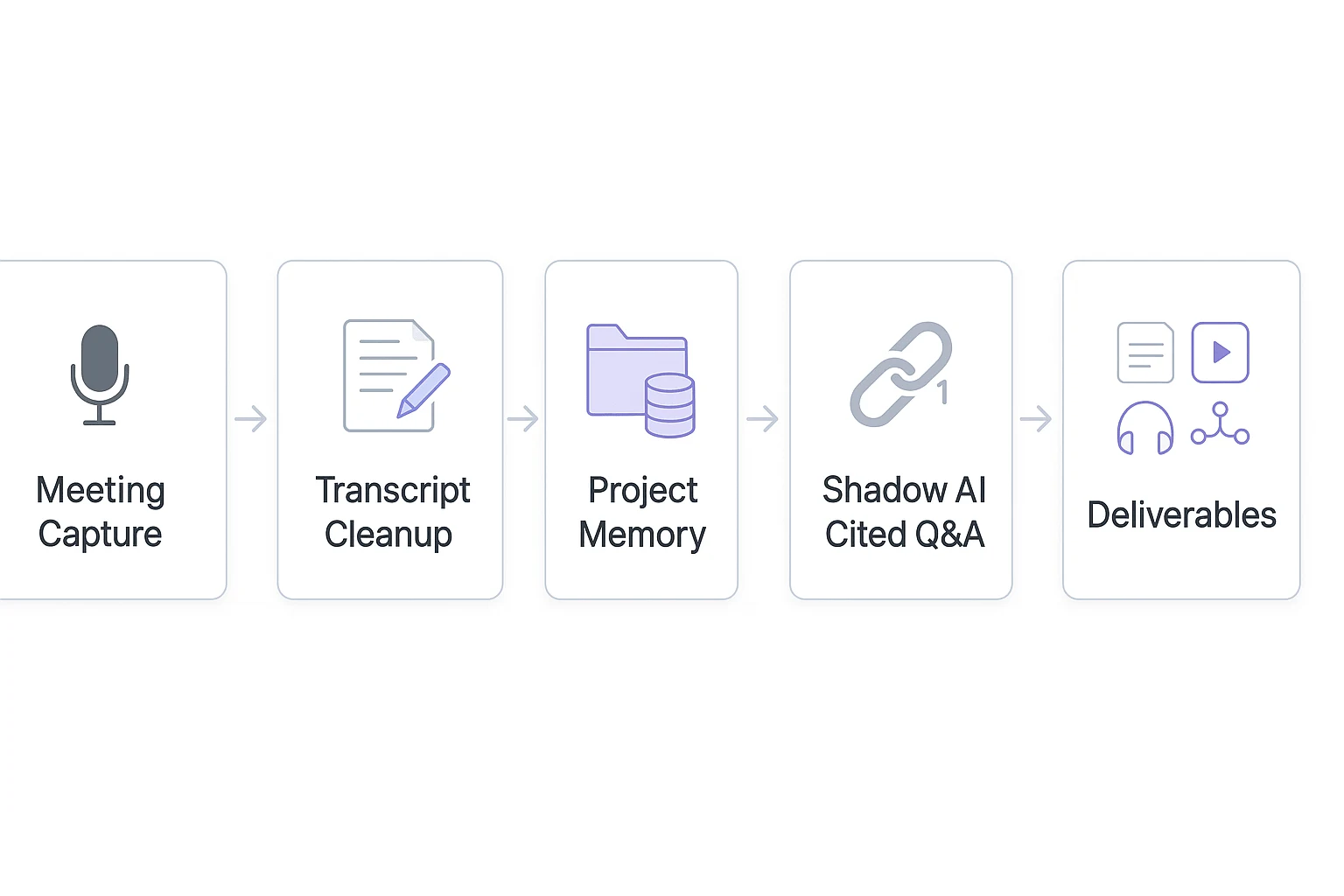

Meetings create scattered context. That slows reviews, repeats decisions, and makes model outputs hard to verify. TicNote Cloud turns transcripts and files into Project memory, cited Q&A, reports, mind maps, and reusable deliverables.

Claude Opus 4.7 vs Gemini 3.1: why this comparison matters now

The Claude Opus 4.7 vs Gemini 3.1 debate is trending because teams are no longer testing models with one-off prompts. They're building agentic AI workflows: loops where a model plans, calls tools, checks results, retries failed steps, and follows guardrails before shipping an output.

The agentic AI race behind the trend

This shift changes what "best model" means. A useful agent may run 5–50 tool calls across code search, browser actions, database checks, and document review. Small reliability gaps can become real delays when each step depends on the last one.

Quick capability snapshot for busy teams

At a high level:

- Claude Opus 4.7 tends to stand out for coding-agent robustness, structured reasoning, and long-form outputs.

- Gemini 3.1 Pro tends to appeal to teams that need cost efficiency, broad multimodal ingestion, and large-scale processing.

- Long context AI helps, but it's not the same as memory. A huge input window doesn't guarantee the model can recall, cite, and reuse the right facts later.

Why model choice affects real workflows

Model trade-offs show up in deliverables, not just chat quality. Repo refactors, incident postmortems, user research synthesis, sprint planning notes, and client interview readouts all need consistent inputs, traceable sources, and repeatable outputs.

That's why benchmark wins don't automatically transfer to your stack. Prompts, evals, tools, latency, retries, governance, and permissions all matter. Teams also need a meeting-to-knowledge layer: capture, organize, cite, and ship. That layer makes AI output easier to verify, share, and reuse.

How should teams read model benchmarks without overtrusting them?

In Claude Opus 4.7 vs Gemini 3.1 evals, benchmark scores are useful signals, not buying decisions. A reasoning score measures how well a model solves the test item. Agent reliability measures whether the full loop (model, tools, permissions, tests, and retries) finishes real work without breaking.

Read what each benchmark approximates

- SWE-bench: real GitHub-style software issues. It approximates bug fixing across unfamiliar repos.

- MCP Atlas: Model Context Protocol tool orchestration. It approximates planning across multiple tools and data sources.

- OSWorld: desktop and browser computer-use tasks. It approximates UI autonomy under step limits.

- ARC-AGI-2: abstract reasoning tasks. It tests pattern generalization, not production workflow skill.

- Long-context tests: retrieval and coherence over large inputs. They approximate "find it, use it, stay consistent."

Check the gap between lab and stack

Scores can shift when your tool APIs, repositories, prompt templates, eval harness, sandbox limits, or hidden scaffolding differ. For agentic coding, the base model is only one layer. Tool-loop design, test execution, patch validation, and rollback rules often decide success.

Record evals like an engineer

For every run, log model version and date, thinking level or effort, tool list, permissions, max steps, budget, temperature, prompts, and run-to-run variance. Then compare cost per success: completed tasks per dollar after retries and failed tool calls. Later, our normalized table uses scores as directional inputs, not absolute truth.
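As a minimal sketch of the logging habit described above, the record below stores one run per row and derives completed tasks per dollar. The class name, field names, and helper functions are illustrative assumptions, not part of any vendor SDK or eval harness.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class EvalRun:
    """One logged evaluation run. All field names are illustrative."""
    model_version: str
    run_date: str          # ISO date string
    effort: str            # thinking level or effort setting
    tools: list[str]
    permissions: list[str]
    max_steps: int
    budget_usd: float
    temperature: float
    prompt_id: str
    succeeded: bool
    cost_usd: float        # total spend, including retries and failed tool calls


def successes_per_dollar(runs: list[EvalRun]) -> float:
    """Completed tasks per dollar; failed runs still count toward cost."""
    total_cost = sum(r.cost_usd for r in runs)
    if total_cost == 0:
        return 0.0
    return sum(r.succeeded for r in runs) / total_cost


def success_rate_spread(batches: list[list[EvalRun]]) -> tuple[float, float]:
    """Mean and population std-dev of success rate across repeated batches."""
    rates = [sum(r.succeeded for r in b) / len(b) for b in batches if b]
    return mean(rates), pstdev(rates)
```

Running the same task set several times and passing each batch to `success_rate_spread` gives a rough view of run-to-run variance before two models are compared.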

Head-to-head for real workflows: coding, agents, context, and media

In Claude Opus 4.7 vs Gemini 3.1, the real question isn't "which model writes better text?" It's which one completes the job with fewer retries, cleaner tool calls, and less human repair.

Test coding by success, not style

For Claude Opus 4.7 coding, track patch success rate, test execution, dependency handling, tool-call correctness, and safe refactors. Opus often performs well on complex multi-file edits and long agent loops. Gemini can be strong when teams need fast iterations, high-throughput review, or scalable agentic AI workflows. For a deeper coding-specific method, see this agentic coding routing checklist.

| Capability | Claude Opus 4.7 | Gemini 3.1 Pro | Best-fit workload |
| --- | --- | --- | --- |
| Multi-file coding | Very strong | Strong | Refactors, migrations |
| Agent loops | Very strong | Strong | Tool-heavy tasks |
| Fast iteration | Strong | Very strong | Draft-test-repeat work |
| Long context AI | Strong | Very strong | Large docs, repositories |
| Multimodal intake | Strong | Very strong | PDFs, images, audio |
| Main constraint | Cost and latency | Grounding drift risk | Route by task value |

Labels are qualitative. Validate against current vendor technical docs for context windows, output limits, and multimodal support before purchase.

Treat long context as retrieval work

Long context AI means a model can read large inputs, but a 1M-token window doesn't guarantee the right answer. Teams still need chunking, retrieval, grounding, and contradiction checks. For long-document review, use this mini-checklist: define chunks, retrieve exact passages, require citations, compare conflicts, then summarize.
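The first three checklist steps (define chunks, retrieve exact passages, require citations) can be sketched in a few lines. This is a toy version that assumes simple word-overlap scoring; a production setup would more likely use embeddings or a search index, and the conflict-comparison and summary steps are left out. All function names are hypothetical.

```python
import re


def chunk_text(text: str, max_words: int = 50) -> list[dict]:
    """Split text into word-bounded chunks, each with a stable id for citation."""
    words = text.split()
    return [
        {"id": f"chunk-{i // max_words}", "text": " ".join(words[i:i + max_words])}
        for i in range(0, len(words), max_words)
    ]


def retrieve(chunks: list[dict], query: str, top_k: int = 2) -> list[dict]:
    """Rank chunks by how many query terms they contain (toy lexical scoring)."""
    terms = set(re.findall(r"\w+", query.lower()))
    scored = []
    for chunk in chunks:
        overlap = len(terms & set(re.findall(r"\w+", chunk["text"].lower())))
        if overlap:
            scored.append((overlap, chunk))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [chunk for _, chunk in scored[:top_k]]


def cited_context(chunks: list[dict]) -> str:
    """Format retrieved passages so every line carries its source id."""
    return "\n".join(f"[{c['id']}] {c['text']}" for c in chunks)
```

Passing only the output of `cited_context` to the model, and asking it to quote the bracketed ids, keeps each claim traceable to a passage.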

Output ceilings also matter. Long design docs, specs, research reports, and multi-file diffs can hit truncation or lose formatting. Use staged generation: outline first, draft section by section, then verify.

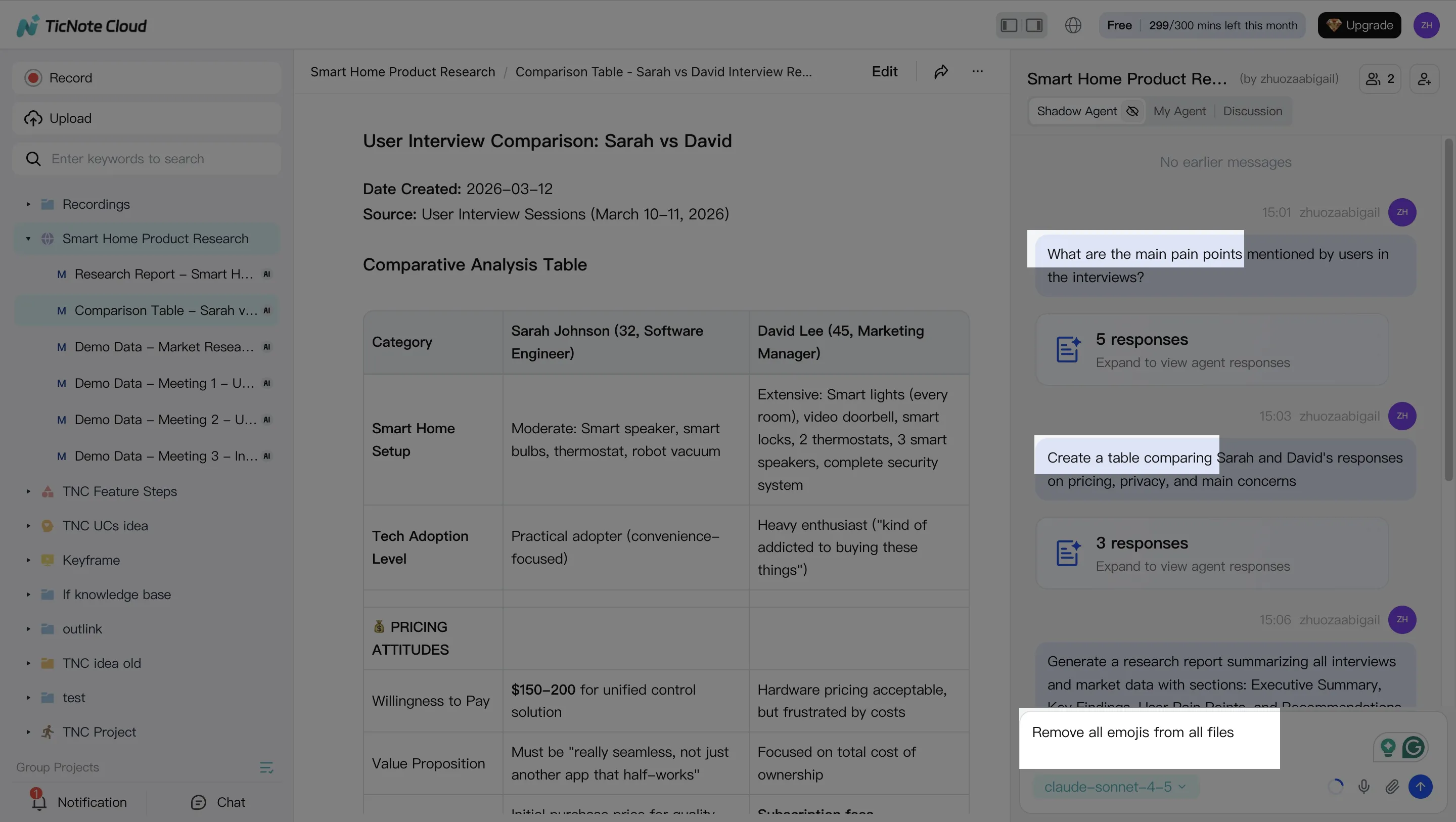

Compare native media with processed media

Native multimodal input is useful for PDFs, images, and audio. Preprocess-then-reason can be safer for meetings because transcription quality, diarization, timestamps, and speaker labels affect every downstream insight. This is where meeting transcription AI and workspaces like TicNote Cloud help: they turn recordings into editable, cited project knowledge before a frontier model writes the final deliverable.

What does total cost look like after retries, tool calls, and batch jobs?

List price is only the starting line in Claude Opus 4.7 vs Gemini 3.1. Real spend is closer to: token cost per attempt (input plus output tokens at their respective prices) × attempts + tool/runtime costs + engineer time. For a deeper forecast model, see this guide to real AI token cost planning.
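The spend formula above can be turned into a small estimator. This is a rough sketch: the function and parameter names are assumptions, token counts are treated as per-attempt averages, and the prices are whatever the current vendor price sheet says, not values taken from this article.

```python
def estimated_task_cost(
    input_tokens: int,
    output_tokens: int,
    attempts: int,
    input_price_per_mtok: float,
    output_price_per_mtok: float,
    tool_runtime_usd: float = 0.0,
    engineer_hours: float = 0.0,
    engineer_hourly_usd: float = 0.0,
) -> float:
    """Rough spend for one task in USD; token counts are per attempt."""
    token_cost_per_attempt = (
        input_tokens / 1_000_000 * input_price_per_mtok
        + output_tokens / 1_000_000 * output_price_per_mtok
    )
    return (
        token_cost_per_attempt * attempts
        + tool_runtime_usd
        + engineer_hours * engineer_hourly_usd
    )
```

Comparing two models with their own measured `attempts` values, rather than list prices alone, shows when a pricier model ends up cheaper per finished task.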

Price code agents by successful task

A repo refactor agent may ingest code context, analyze dependencies, propose patches, run tests, then iterate. Costs balloon when diffs get long, tests fail, or the model loops through tool feedback 5–10 times.

Control spend with:

- Step limits per task

- Fixed token budgets

- Lower "thinking" levels for simple checks

- Human approval before broad edits

- Cached repo summaries

A stronger model can cost less overall if it solves the task in fewer attempts.
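Three of the spend controls listed above (step limits, fixed token budgets, and human approval before broad edits) can be enforced in the loop that drives the agent. The sketch below assumes a hypothetical `step` callable that proposes one action per call and reports its token use; it does not model any specific agent framework.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class StepResult:
    done: bool                # True when the task is finished
    tokens_used: int          # tokens consumed by this step
    broad_edit: bool = False  # True if the step proposes touching many files


def run_with_limits(
    step: Callable[[int], StepResult],
    max_steps: int,
    token_budget: int,
    approve: Callable[[int], bool] = lambda step_index: False,
) -> str:
    """Run an agent step function until it finishes or a limit is hit."""
    tokens_spent = 0
    for i in range(max_steps):
        result = step(i)
        tokens_spent += result.tokens_used
        if tokens_spent > token_budget:
            return "stopped: token budget exceeded"
        if result.broad_edit and not approve(i):
            return "stopped: broad edit needs human approval"
        if result.done:
            return "done"
    return "stopped: step limit reached"
```

The default `approve` callback rejects every broad edit, so a caller has to opt in to wide changes explicitly.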

Price synthesis by cited output

For meeting research, the spike comes from long transcripts, imported docs, extracted risks, and citation checks. A 90-minute interview can fill a large share of the context window before the final report starts.

Hidden costs include queueing, batch versus real-time trade-offs, rate limits, logging, PII handling, evaluation harnesses, and regression tests. Optimize for cost per successful, reproducible deliverable, not the cheapest token.

Where TicNote Cloud fits in the Claude Opus 4.7 and Gemini 3.1 stack

In a Claude Opus 4.7 vs Gemini 3.1 evaluation, the model is only one layer. Teams also need a workflow layer that keeps meeting context, decisions, sources, and follow-ups intact before any model reasons over them.

Turn meetings into structured context

Raw chats are weak memory. They lose who said what, which decision changed, and where a claim came from. TicNote Cloud fills that gap with bot-free recording, meeting transcription AI, editable transcripts, and structured extraction for:

- Decisions

- Action items

- Risks

- Open questions

- Customer quotes

That cleaned context can then support stronger prompts, better review, and faster validation. If you're testing model changes, pair this with a lightweight process to validate Claude model behavior before rolling it into production workflows.

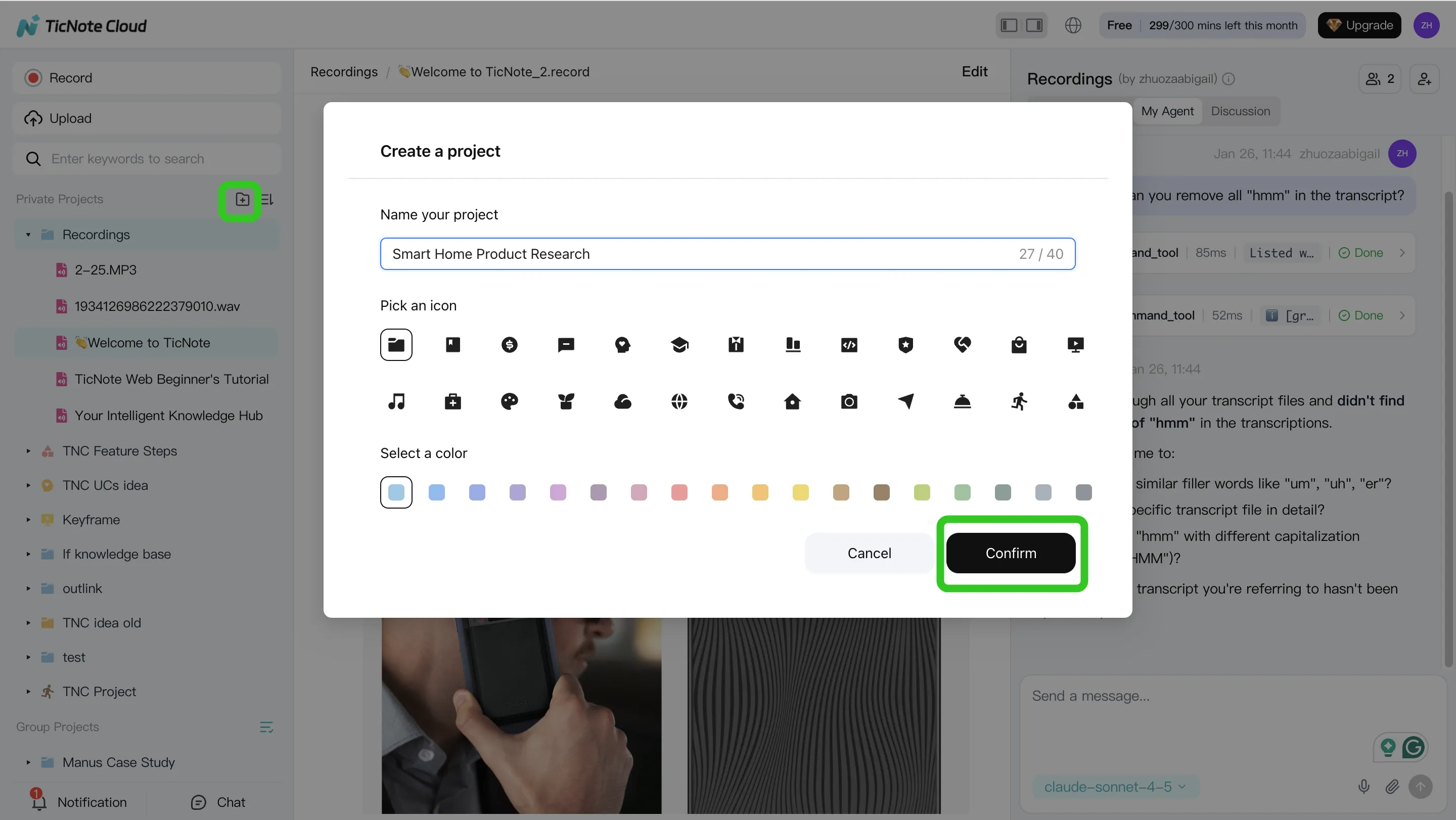

Use Projects as practical long-context memory

Projects work like scoped long-context AI memory. A team can group multiple meetings, documents, links, and research files in one place, then let knowledge build over weeks instead of starting from zero each session.

Ask Shadow AI for cited answers

Shadow AI is Project-scoped, not loose chat history. It answers across selected files, points back to clickable sources, and keeps operations traceable. For technical teams, that improves governance, reproducibility, and review.

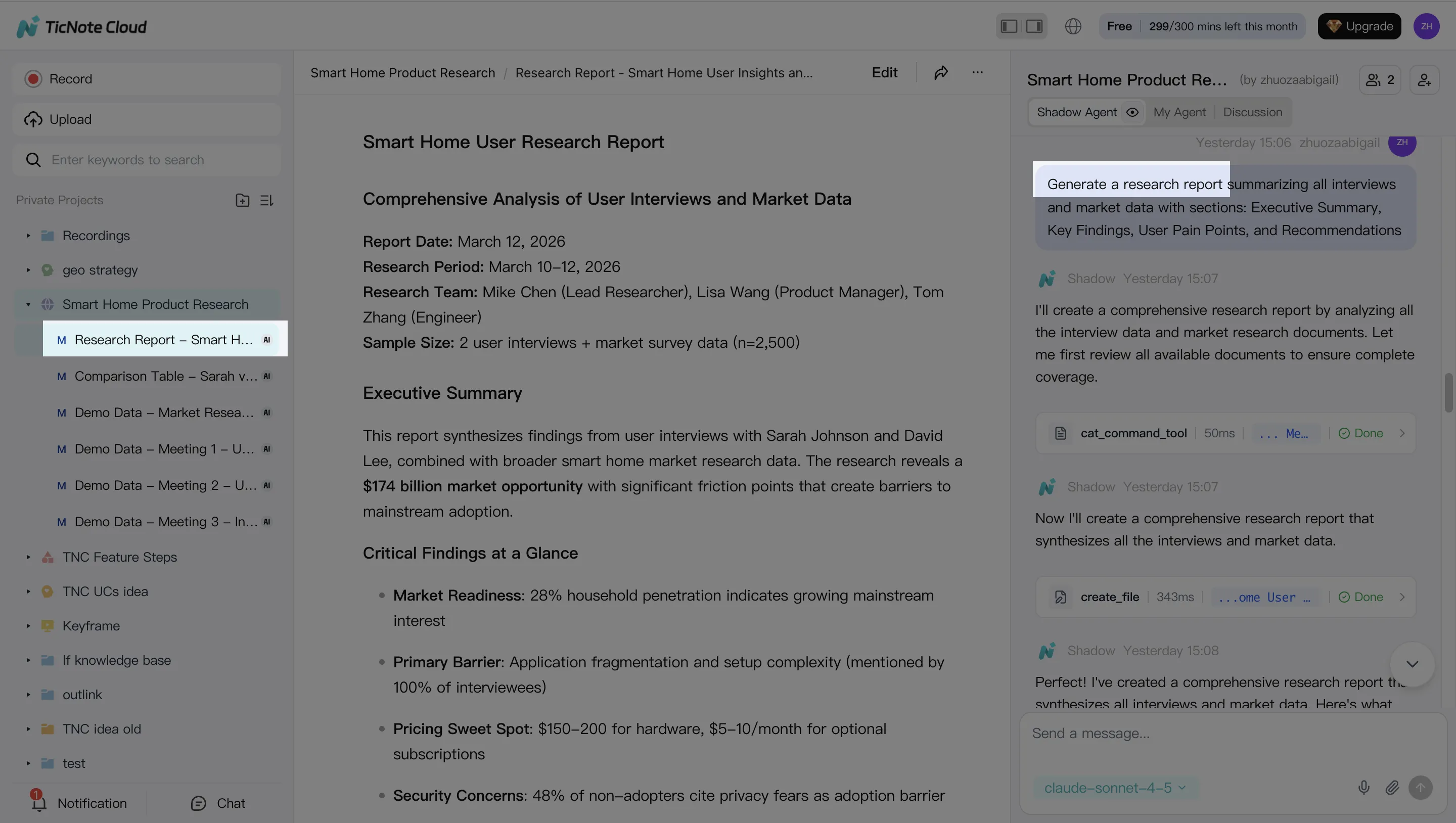

Turn context into deliverables

Use reports for user research, web presentations for clients, podcast summaries for async updates, and mind maps for sprint planning.

TicNote Cloud proprietary layer: from meetings to cited deliverables

Raw model APIs can answer prompts, but teams still need source capture, review, and repeatable output. In a Claude Opus 4.7 vs Gemini 3.1 evaluation, TicNote Cloud acts as the workflow layer that keeps meetings, files, and AI edits tied to one Project.

Web workflow

- Create or open a Project, then add meeting recordings, videos, and documents. Use direct upload from the file area, or attach files in Shadow AI and ask it to save them to the right folder.

- Use Shadow AI to search, analyze, edit, and organize Project content. Ask for pain points, action items, interview themes, tables, or cleaner wording. Citations stay connected to the original timestamps and speakers, so review is fast.

- Generate deliverables from the same evidence base: a deep research report, HTML web presentation, podcast recap, mind map, or HTML page. If one section is weak, regenerate that section instead of rerunning the whole job.

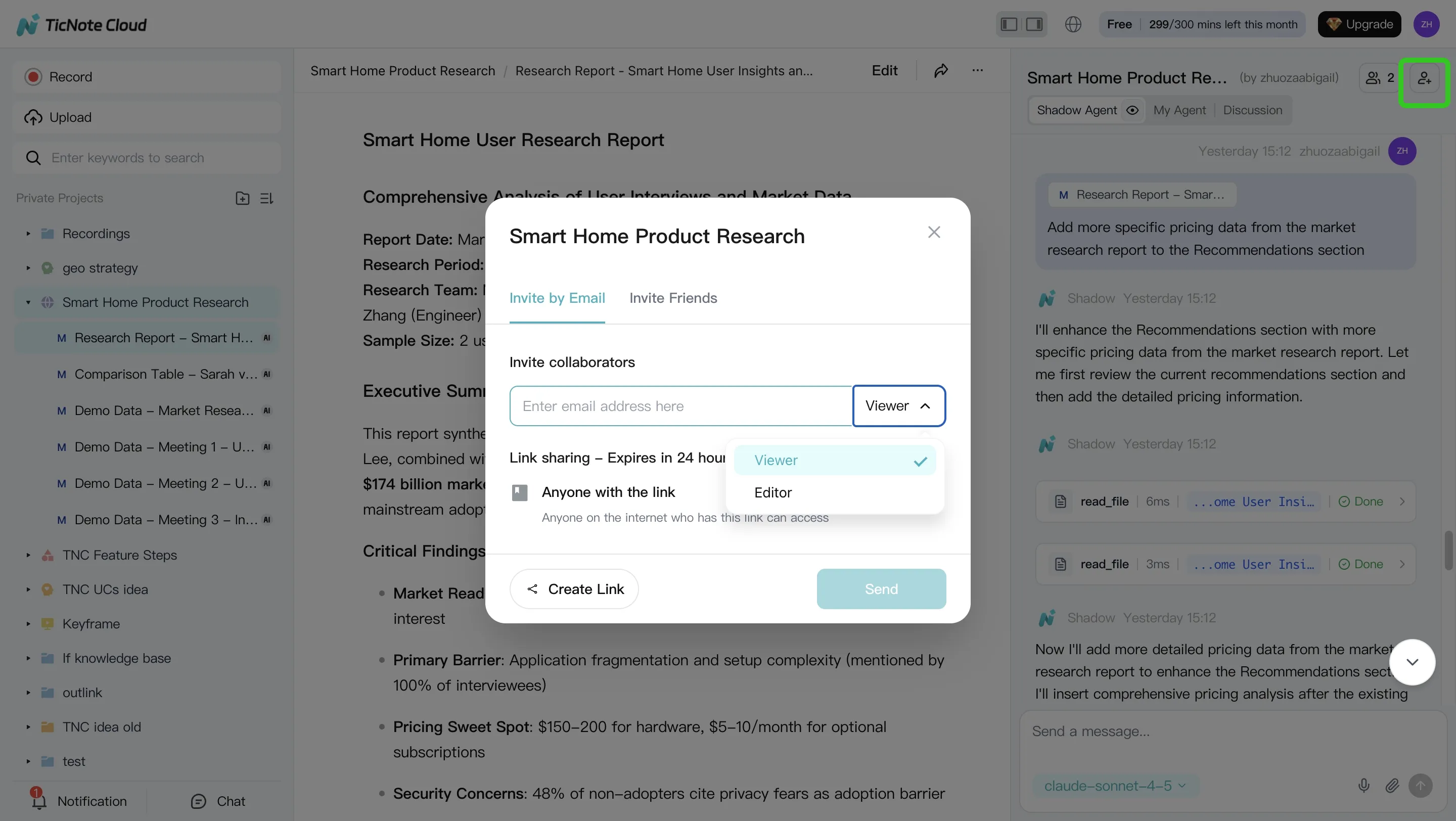

- Review the output, refine wording, assign permissions, and collaborate with comments. Click a paragraph to verify the source; Shadow AI stays inside permission boundaries and tracks operations.

App workflow

The mobile app can create Projects, import audio or video, run Shadow AI Q&A, and generate deliverables during fieldwork. Everything syncs back to the same Project for team review.

Use this for sprint planning, user interviews, client calls, and long-document review.

Decision guide: model choice by use case, scale, and constraints

In a Claude Opus 4.7 vs Gemini 3.1 decision, start with failure cost, input type, and review needs. Raw model APIs fit product features and custom automation. TicNote Cloud fits best when meetings must become cited, reusable team knowledge.

Route by workload

Choose Claude Opus 4.7 for high-stakes coding agents, complex repo changes, multi-step refactors, and long generated specs. Add budgets, rate limits, and retry caps so reliability doesn't become open-ended spend.

Choose Gemini 3.1 for lower-cost high-volume summarization, native multimodal intake, and large ingestion jobs. Batch or asynchronous processing can cut pressure on latency and unit cost.

Choose TicNote Cloud when the source is meetings. It adds Project memory, editable transcripts, cited Q&A, and one-click reports on top of whichever model your team uses.
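The routing guidance above can be read as a first-match rule. The sketch below encodes it with hypothetical category labels; the labels, class name, and fallback value are assumptions for illustration, and a real router would also weigh budget, latency, and governance.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    source: str               # e.g. "meetings", "repo", "media", "documents"
    task: str                 # e.g. "refactor", "coding-agent", "spec", "summarize"
    high_volume: bool = False


CODING_TASKS = {"refactor", "coding-agent", "repo-change", "spec"}


def route(w: Workload) -> str:
    """Return the first matching option from the workload guidance."""
    if w.source == "meetings":
        return "TicNote Cloud"
    if w.task in CODING_TASKS:
        return "Claude Opus 4.7"
    if w.high_volume or w.source == "media" or w.task == "summarize":
        return "Gemini 3.1"
    return "evaluate both"
```

Meetings are checked first because TicNote Cloud is described as a layer on top of whichever model the team uses, not as a competing model.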

| Dimension | Best fit | Best for | Watch point |
| --- | --- | --- | --- |
| Coding agent reliability | Claude Opus 4.7 | Engineering teams | Set spend limits |
| Multimodal intake | Gemini 3.1 | Media pipelines | Validate outputs |
| Long outputs | Claude Opus 4.7 | Specs and refactors | Review drafts |
| Governance/citations | TicNote Cloud | Consultants, PMs, researchers | Keep sources organized |
| Meeting-first workflow | TicNote Cloud | Cross-functional teams | Capture full context |
| Cost signals | Gemini 3.1 | High-volume teams | Use batch jobs |
| Integration constraints | Model API or TicNote Cloud | Builders or business teams | Match tool to workflow |

Use the model API where you need raw generation. Use TicNote Cloud where you need capture, memory, citations, and reusable deliverables.

Final thoughts

Claude Opus 4.7 vs Gemini 3.1 isn't a trophy race. Choose by workflow, budget, latency, governance, and failure tolerance. Claude Opus 4.7 is the premium pick when agentic coding and long outputs must work on the first try. Gemini 3.1 Pro is the cost-conscious frontier model for high-volume and multimodal work.

For teams, context is the real constraint. If your "system prompt" is meeting history, use a workspace that can capture, organize, cite, and ship it. TicNote Cloud users can get 30 free Claude Opus 4.7 Premium credits every month.